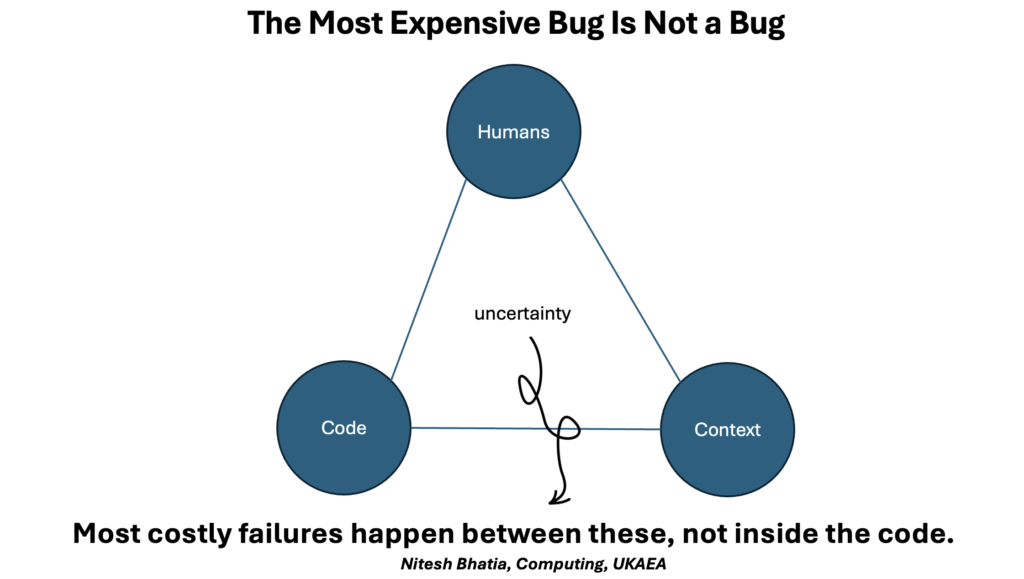

While preparing a short lightning talk for an internal software unconference, I kept asking myself a simple question: what is genuinely worth saying in two minutes? In the end, I titled the slide “The Most Expensive Bug Is Not a Bug.” That felt like the simplest way to capture the idea.

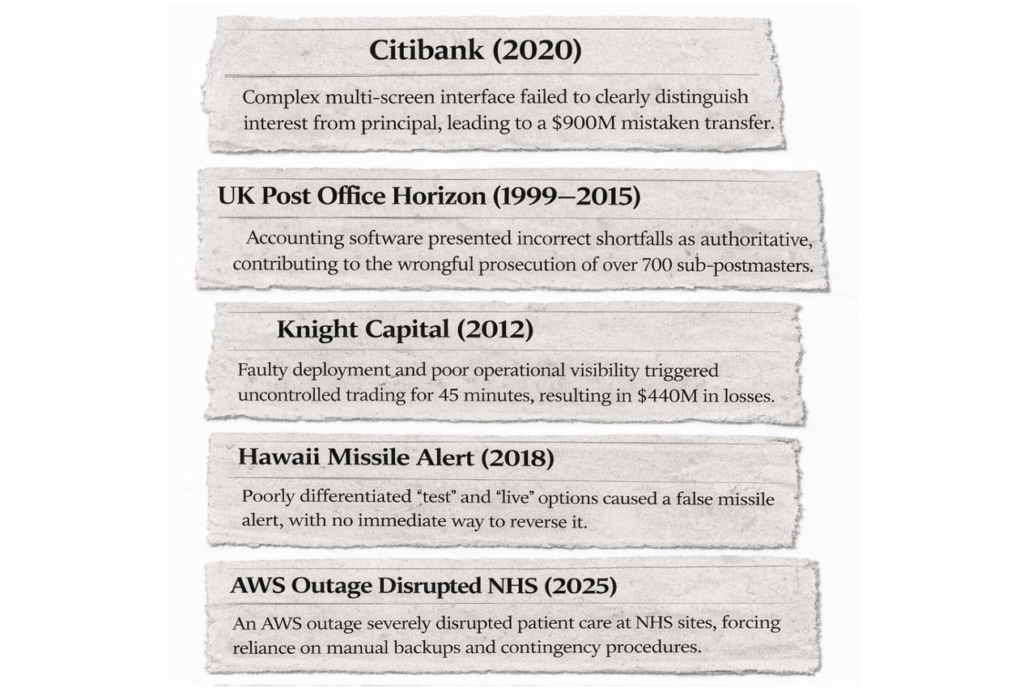

This led me to think about a phenomenon that extends far beyond software. In many well-known incidents, serious consequences emerged from the ways design choices guided interpretation, trust, and action. Systems behaved as expected, yet the way information was presented shaped how people understood the situation. Over time, small assumptions embedded in interfaces and interactions quietly influenced decisions with very real outcomes.

This is something we all experience every day without realising it. When we ask Siri, “What’s the weather today?”, we rarely think about uncertainty, data sources, or confidence levels. We receive a single answer, delivered calmly, and we plan our day around it. The interaction appears simple, yet it conceals layers of complexity and underlying assumptions.

The way we interact with physical systems is changing, too. In a modern car, pressing a button or speaking a command like “open the glovebox” triggers a chain of software-driven decisions. The experience is immediate and obvious. The control systems, sensors, and logic disappear from view. Trust sits entirely in the interface.

At a much larger scale, complex scientific and industrial systems operate in a similar fashion. In fusion research, for instance, modern control rooms integrate streams of diagnostics, models, and simulations to inform rapid, high-stakes decisions. The physics at play is highly complex, but what the operators see is a carefully curated set of views, dashboards, and alerts. The design of these views shapes how quickly operators recognise patterns and how confidently they act. Clarity, context, and the communication of uncertainty become central to safe and effective operation.

Much of our broader digital world operates in the same way. Hyperscale data centres now power our daily services, but most of us interact with them only through clean software interfaces. We move data, launch services, or conduct analysis without worrying about where computation occurs or how resilience is engineered. The abstraction is very powerful, and so is the responsibility that accompanies it.

As AI-enabled tools and agent-based systems become more widespread, interactions are becoming more fluid and more conversational. Screens are accompanied by copilot chat interfaces. Voice becomes a common way to access complex capabilities. Intent is expressed more casually, decisions happen more quickly, and the boundary between human judgment and machine output becomes less visible.

Seen through the lens of complex systems, trust does not appear all at once or as a fixed property. It develops gradually as assumptions are surfaced, uncertainty is communicated, and people learn how a system behaves over time. As systems gain greater autonomy, accountability spreads across humans, processes, and the technologies that connect them. From this perspective, design becomes one of the key forces shaping how that relationship evolves, influencing how trust forms, adapts, and is sustained.

Kant once argued that we experience the world through the structures that shape our understanding. While many might debate the details, I find the core idea compelling. Today, many of those structures are designed systems: interfaces, models, and increasingly AI-driven agents. The way they present information, express confidence, and frame choices influences how we reason and decide. A conversational system like ChatGPT, for instance, can answer a question clearly and confidently, and that confidence alone can shape how much we trust the response, even when the underlying uncertainty remains invisible.

Reflecting on this, I’ve started to feel that design is becoming less about decoration and more about the scaffolding that holds up a house of trust. Whether it’s an interaction on a screen in a control room, a conversational agent on a phone, or a voice command in a car, the way systems communicate shapes how people think under uncertainty. Getting that right may matter more than any single technical breakthrough.

To be honest, I didn’t manage to say all of this in two minutes at the unconference. The topic felt worth continuing here. If this resonates, I’d be glad to continue the conversation. Feel free to connect, reach out or share your thoughts.